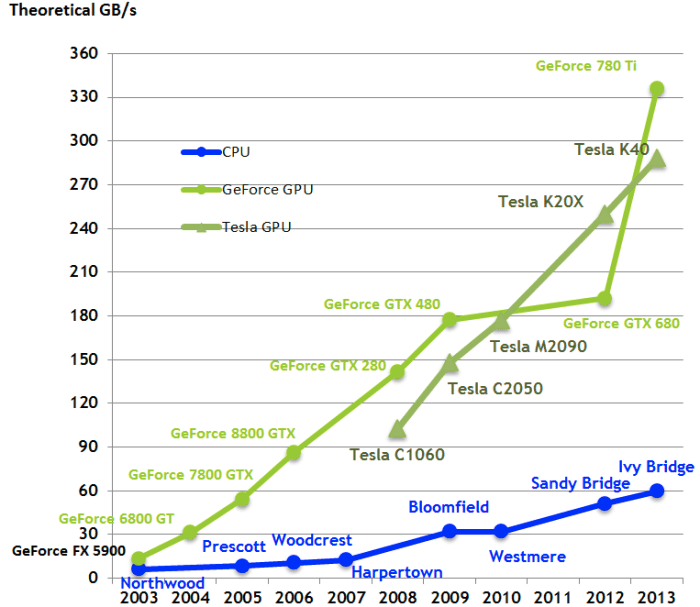

3: Comparison of CPU and GPU FLOPS (left) and memory bandwidth (right)....

Throughput of the GPU-offloaded computation: short-range non-bonded...

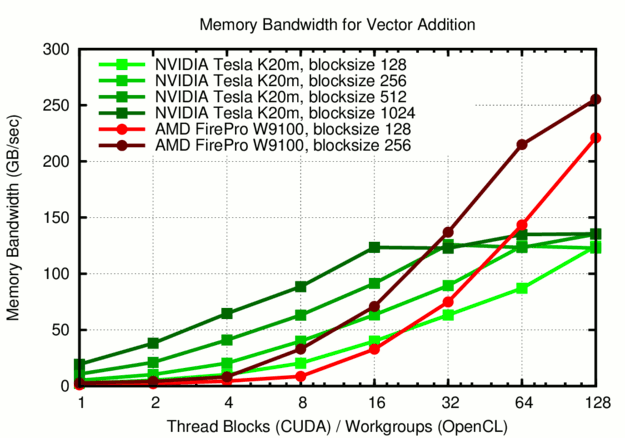

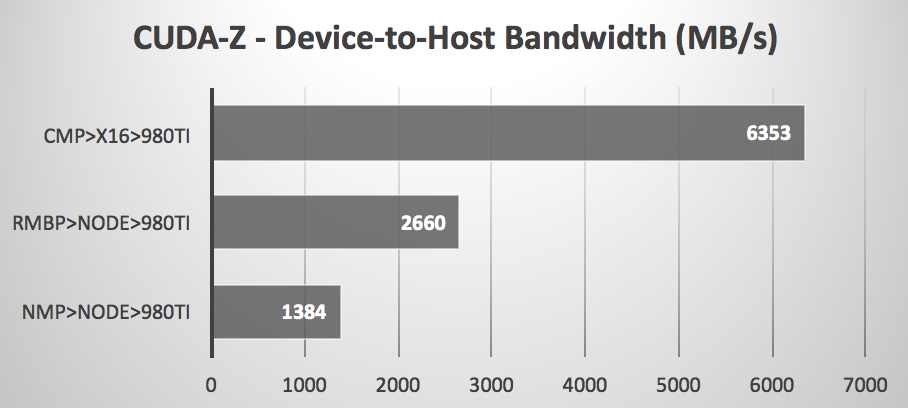

GPUs greatly outperform CPUs in both arithmetic throughput and memory...

How Amazon Search achieves low-latency, high-throughput T5 inference with NVIDIA Triton on AWS | AWS Machine Learning Blog

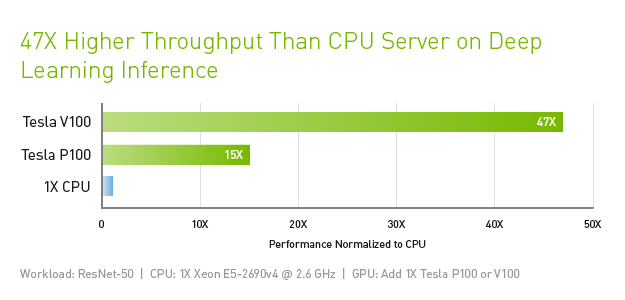

Why are GPUs So Powerful? Understand the latency vs. throughput… | by Ygor Serpa | Towards Data Science

NVIDIA Ada Lovelace 'GeForce RTX 40' Gaming GPU Detailed: Double The ROPs, Huge L2 Cache & 50% More FP32 Units Than Ampere, 4th Gen Tensor & 3rd Gen RT Cores